The Unverified Negatives

In deployed diagnostic AI, asymmetric verification produces structural false-negative blindness that standard oversight cannot reach.

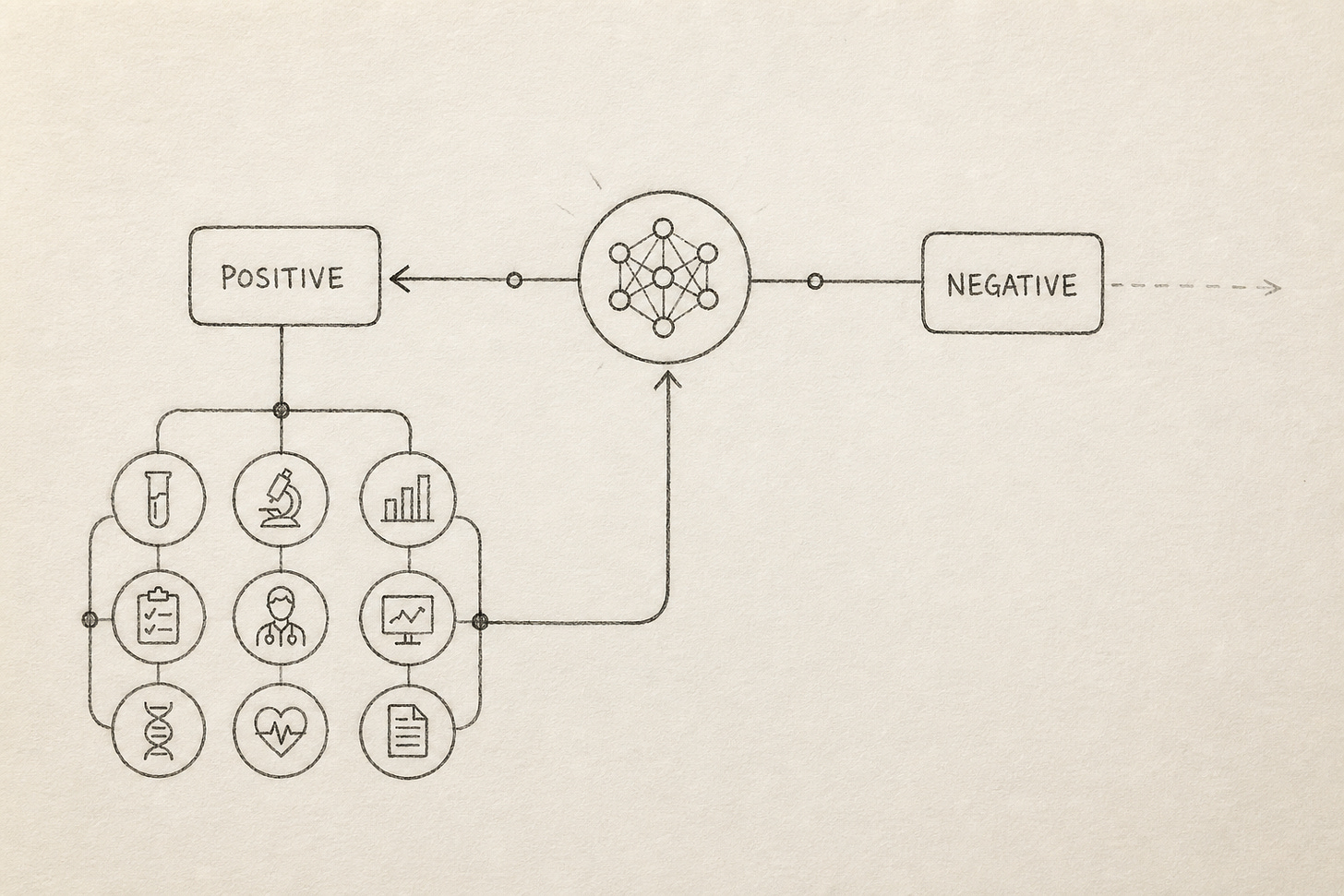

When AI is deployed in diagnostic settings, protocol logic routes positive outputs into a confirmation pathway and negative outputs out of the system entirely. The failure mode this produces is the unverified negative: a clearance that exits without entering any pathway that could reveal an error. This essay identifies unverified negatives as a distinct failure class produced by asymmetric verification, a deployment structure in which only positive outputs are verified. It then shows that standard AI safety oversight mechanisms cannot reach this failure class, because the protocol structurally cannot generate the signal they require. The argument is grounded empirically in programmatic data from AI tuberculosis screening deployed at national scale across high-burden countries.

Part One: The Failure Class

The threat model

Conditions: The AI operates as the terminal decision node on the negative side, and negatives exit the system entirely with no protocol mechanism (routine follow-up, sentinel sampling, random audit) that routes a cleared subject back into a pathway that could reveal an error.

Asymmetric verification: only alarms are verified, silence is not.

Use: AI screening replaces radiologists in high-burden TB programmes, clearing patients at scale without confirmatory follow-up on negative outputs.

Harm: A person with TB whom the AI scores negative returns home without a treatment plan, deteriorates, and can transmit the disease to their household.

Impact: Missed cases accumulate in the community. When the patient eventually re-enters the health system, it is as a more advanced case, compounding transmission burden and treatment costs.

In infectious-disease screening, the harm of an unverified negative is not limited to one subject. A missed infectious case is cleared into the community, and the miss extends to everyone that patient exposes before the infection surfaces elsewhere in the system. This is what makes the unverified negative rate in this failure class not just unknown but consequential at population scale.

A false positive enters a confirmation pathway and is eventually corrected, at cost and with delay, but it enters a system that can act on it. An unverified negative does not. The subject is cleared, exits the system, and generates no downstream signal. No incident is reported because no incident is recognized.
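The routing asymmetry can be made concrete in a few lines. The sketch below is a minimal illustration, not a model of any real programme: the threshold value, the `route` function and the `Outcome` record are hypothetical names introduced here. It shows that every positive output leaves a record a reviewer could inspect, while a negative output leaves nothing, whether or not the clearance was correct.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical decision threshold, for illustration only.
THRESHOLD = 0.5


@dataclass
class Outcome:
    """A downstream record that an oversight process could later inspect."""
    subject_id: str
    ai_score: float
    confirmed_positive: bool


def route(subject_id: str, ai_score: float, has_disease: bool) -> Optional[Outcome]:
    """Asymmetric routing: only positive outputs enter confirmation.

    `has_disease` stands in for the true status, which the system never
    observes directly. It is read only on the positive branch, where a
    confirmatory test reveals it.
    """
    if ai_score >= THRESHOLD:
        # Positive output: confirmation reveals the true status and leaves
        # a record, including when the AI was wrong (a false positive).
        return Outcome(subject_id, ai_score, confirmed_positive=has_disease)
    # Negative output: the subject is cleared and exits. Nothing is recorded,
    # whether the clearance was correct or a missed case.
    return None
```

A false positive such as `route("a", 0.8, False)` still yields an `Outcome` with `confirmed_positive=False`, so the error is visible downstream. A missed case, `route("b", 0.2, True)`, returns `None`, exactly as the correct clearance `route("c", 0.2, False)` does: from inside the system the two are indistinguishable.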

Why standard AI safety oversight cannot reach it

Sterz et al. (2024) have begun specifying the conditions under which human oversight is meaningful rather than nominal. The overseer must have (a) sufficient causal power with regard to the system and its effects, (b) suitable epistemic access to relevant aspects of the situation, (c) self-control, and (d) fitting intentions for their role. Asymmetric verification eliminates conditions (a) and (b) at the protocol level: the negative clearance never enters a pathway a reviewer can see or act on.

The resilience cycle framework's four defense interventions (monitoring, human oversight, escalation protocols, and comparative auditing) each presuppose a verification comparator: an outcome signal the defense mechanism can act on. Asymmetric verification removes that signal before any intervention engages.
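The missing comparator can be illustrated with a toy monitor. In this hypothetical sketch (the `Verified` record and both metric functions are illustrative, not part of any cited framework), a monitor computes error metrics from the records the protocol actually produces. Fed only positive-pathway records, it reports perfect sensitivity and an undefined false-negative rate. The monitor is computing correctly on the data it has; the data simply contain no negative-side signal.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Verified:
    """One AI output whose true status was later confirmed."""
    ai_positive: bool
    truly_positive: bool


def false_negative_rate(records: list[Verified]) -> Optional[float]:
    """Share of verified negative outputs that were actually positive.

    Returns None when no negative output was ever verified: the quantity
    is not zero, it is undefined.
    """
    negatives = [r for r in records if not r.ai_positive]
    if not negatives:
        return None
    return sum(r.truly_positive for r in negatives) / len(negatives)


def apparent_sensitivity(records: list[Verified]) -> Optional[float]:
    """Sensitivity, TP / (TP + FN), computed from whatever records exist.

    Under asymmetric verification no false negative is ever recorded,
    so FN is always zero and this collapses to TP / TP.
    """
    tp = sum(r.ai_positive and r.truly_positive for r in records)
    fn = sum((not r.ai_positive) and r.truly_positive for r in records)
    if tp + fn == 0:
        return None
    return tp / (tp + fn)


# Records from the positive pathway only: two true and one false positive.
positive_pathway_only = [Verified(True, True), Verified(True, False), Verified(True, True)]
print(apparent_sensitivity(positive_pathway_only))  # 1.0
print(false_negative_rate(positive_pathway_only))   # None
```

Adding even a handful of verified negatives changes both numbers: the false-negative rate becomes defined, and any missed case found among them pulls apparent sensitivity below 1.0.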

The Case Study (Tuberculosis AI)

The World Health Organization endorsed the use of AI to read chest X-rays for tuberculosis in 2021. Three commercially available tools (CAD4TB, qXR, and Lunit INSIGHT) had been independently evaluated on tens of thousands of chest X-rays. Their accuracy was broadly comparable to that of board-certified radiologists on controlled validation sets. The deployment that followed is now operating at national scale across high-burden countries.

The positive pathway is intact: AI flags an abnormality, a health worker collects sputum, GeneXpert provides molecular confirmation. Symptomatic patients are classified as Presumptive TB regardless of AI score and receive further workup. Household and close contacts of confirmed TB cases are not discharged on a negative AI screen. Patients living with HIV receive aggressive monitoring. Additional safeguards apply to every named high-risk subgroup.

One pathway remains structurally open across every implementation of this protocol. The asymptomatic, HIV-negative patient with no known TB contact, screened during community Active Case Finding, scored below threshold by the AI, is cleared and sent home. No sputum is collected. No follow-up is triggered. No mechanism exists to know whether that clearance was correct. That patient is what this essay calls an unverified negative.
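The protocol described above can be written as a decision function. This is a simplified sketch: the threshold value, field names, pathway labels and the order of checks are hypothetical, and real programmes differ in detail. What it makes visible is that every branch except the last routes the person somewhere an error could surface.

```python
from dataclasses import dataclass
from itertools import product

# Hypothetical CAD score threshold; programmes calibrate their own.
CAD_THRESHOLD = 50.0


@dataclass
class Screenee:
    cad_score: float          # AI abnormality score for the chest X-ray
    symptomatic: bool
    household_contact: bool   # contact of a confirmed TB case
    hiv_positive: bool


def triage(p: Screenee) -> str:
    """Return the pathway a screened person enters (simplified sketch)."""
    if p.cad_score >= CAD_THRESHOLD:
        return "sputum_genexpert"            # AI positive: molecular confirmation
    # Below threshold: each named safeguard catches one subgroup.
    if p.symptomatic:
        return "presumptive_tb_workup"       # presumptive TB even with a low AI score
    if p.household_contact:
        return "contact_followup"            # not discharged on a negative screen
    if p.hiv_positive:
        return "hiv_intensified_monitoring"
    return "cleared_unverified"              # the one branch with no follow-up


# Enumerate every safeguard combination for a below-threshold score.
cleared = [
    flags for flags in product([False, True], repeat=3)
    if triage(Screenee(30.0, *flags)) == "cleared_unverified"
]
print(cleared)  # [(False, False, False)]
```

Of the eight combinations of symptom, contact and HIV status, exactly one (asymptomatic, no known contact, HIV-negative) reaches `cleared_unverified`, and that is the population Active Case Finding is screening.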

The scale of unverified negatives

The asymmetric verification structure is documented across at least three countries: South Africa, Pakistan, and the Philippines. The Philippine data provides the most granular confirmation. Marquez et al. (2025) evaluated AI TB screening across four regions over three years and identified 40,486 individuals who were CAD-negative and symptom-negative and received no confirmatory testing.

Two factors are reinforcing asymmetric verification in real deployments:

GeneXpert cartridges are supply-constrained, and testing all CAD-negative, symptom-negative patients would consume the entire cartridge budget, defeating the resource-conservation purpose of AI screening.

The WHO/TDR calibration toolkit and the WHO 2021 consolidated screening guidelines address only the positive pathway (threshold setting, sensitivity targets, PPV optimisation, number-needed-to-test calculations) and specify no verification protocol for negative outputs. Programmes cannot require what the authorizing guidance does not specify.
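The cartridge constraint applies to full verification, not to verification as such. The sketch below compares the cost of confirming every cleared negative with confirming a fixed percentage of them. One cartridge per person tested is a simplifying assumption (it ignores repeat and invalid runs), the 5% and 1% levels are illustrative rather than recommendations, and 40,486 is the Philippine count cited above, pooled over four regions and three years.

```python
def cartridges_needed(n_negatives: int, sample_percent: int) -> int:
    """Cartridges needed to confirm a given percentage of cleared negatives.

    Assumes one cartridge per person tested (no repeat or invalid runs).
    Integer ceiling division avoids floating-point rounding.
    """
    if not 0 <= sample_percent <= 100:
        raise ValueError("sample_percent must be between 0 and 100")
    return -(-n_negatives * sample_percent // 100)


# CAD-negative, symptom-negative individuals reported by Marquez et al. (2025).
UNVERIFIED = 40_486

for pct in (100, 5, 1):
    print(pct, cartridges_needed(UNVERIFIED, pct))
# 100 40486
# 5 2025
# 1 405
```

The trade-off is therefore not all-or-nothing: a defined sample costs a small, predictable fraction of what full verification would.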

How harm accumulates

First, the programme undermines itself. The TB programme deploys diagnostic AI during Active Case Finding precisely to find the asymptomatic, non-contact, HIV-negative patient: the population for whom early detection has the highest public health value, because transmission can begin before symptoms appear. The population the programme exists to reach and the population the protocol leaves unverified are the same population.

Second, AI failures become invisible to accountability. When notification rates fall short of targets, health system variables remain the only available explanation, because programme data provides no mechanism to attribute the gap to the screening tool. The failure registers as programme underperformance, not AI failure.

Third, drift goes undetected. If the screened population drifts away from the population the model was validated on, the rate of false negatives among its clearances would rise. In a structure that cannot detect the drift, that trajectory is unobservable, and the gap between measured and true disease burden widens without producing a detectable signal.
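Why drift is unobservable can be shown with a minimal per-period monitor. In this hypothetical sketch, a programme tracks the false-negative rate among negatives it has verified in each reporting period and raises an alert when any period exceeds a baseline plus a margin. The function names, the baseline, the margin and the counts are all illustrative assumptions. Under asymmetric verification every period's rate is undefined, so the alert cannot fire however far the model has drifted.

```python
from typing import Optional, Sequence


def period_fn_rates(verified: Sequence[int], missed: Sequence[int]) -> list[Optional[float]]:
    """False-negative rate per reporting period among verified negatives.

    verified[i]: cleared negatives that received confirmatory testing in period i
    missed[i]:   how many of those turned out to be positive
    A period with no verified negatives has an undefined rate (None).
    """
    return [m / v if v else None for v, m in zip(verified, missed)]


def drift_alert(rates: Sequence[Optional[float]], baseline: float, margin: float) -> bool:
    """Flag drift if any defined rate exceeds baseline + margin.

    Undefined periods can never trigger the alert.
    """
    return any(r is not None and r > baseline + margin for r in rates)


# Asymmetric verification: no negatives are ever verified.
asymmetric = period_fn_rates([0, 0, 0], [0, 0, 0])
# Hypothetical sampled programme: 200 negatives confirmed per period.
sampled = period_fn_rates([200, 200, 200], [4, 6, 14])

print(drift_alert(asymmetric, baseline=0.02, margin=0.03))  # False
print(drift_alert(sampled, baseline=0.02, margin=0.03))     # True
```

A real monitor would also need to account for sampling noise in each period; the fixed margin here is only a placeholder for that.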

Conclusion

Asymmetric verification is a structural property of the deployment, not a resource or implementation failure. Standard oversight mechanisms cannot reach it because the protocol cannot generate the signal they require. The failure class is not theoretical: programmatic data from AI tuberculosis screening across the Philippines, South Africa, and Pakistan documents the same gap in three independent deployments, with 40,486 unverified negative patients in the Philippine data alone.

The structural complement is verification symmetry: extending confirmatory testing to a defined sample of negative outputs provides the conditions to initiate the resilience cycle and meaningful human oversight. Points at which this requirement can be made enforceable already exist: WHO consolidated guideline revision cycles, the WHO/TDR calibration toolkit, Global Fund disbursement conditions, and ADB health system financing. Each is a procurement or authorization chokepoint where verification symmetry can be specified before deployment hardens the asymmetric structure into the institutional baseline.
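Verification symmetry needs two mechanical pieces: a reproducible way to choose which negatives are confirmed, and an estimate of the false-negative rate that states its own uncertainty. The sketch below shows one way to provide both. Its choices are illustrative assumptions, not part of the proposal above: simple random sampling with a recorded seed, a sample of 200, and a Wilson score interval at z = 1.96 (about 95%). The Wilson interval is a swapped-in standard method, chosen because it behaves better than the simple normal approximation when the observed proportion is near zero.

```python
import math
import random


def select_for_verification(negative_ids: list[str], k: int, seed: int) -> list[str]:
    """Simple random sample of k cleared negatives for confirmatory testing.

    A recorded seed makes the draw reproducible, so an auditor can re-run it.
    """
    return random.Random(seed).sample(negative_ids, k)


def wilson_interval(positives: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for the share of sampled negatives that were positive."""
    if n == 0:
        raise ValueError("no negatives were verified; the rate is undefined")
    p = positives / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical: 200 cleared negatives are sampled and confirmed,
# and none turns out positive.
low, high = wilson_interval(positives=0, n=200)
print(f"{low:.4f} to {high:.4f}")
```

Even a sample in which no missed case is found yields a bounded estimate: with 200 verified negatives and zero positives, the upper limit comes out at roughly 1.9%, a reportable number where the asymmetric protocol produces nothing.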

The author researches AI governance failure modes in LMIC health systems.